New AI models have been launching at a rapid pace lately, from startups and tech giants alike. Mistral just released Mixtral 8x7B, Microsoft unveiled Phi-2, and Google, shortly after unveiling Gemini, has now announced Imagen 2, its most advanced AI image generator yet.

Google DeepMind’s Imagen 2 relies on the now-common diffusion approach. The company’s blog post describes it as “advanced text-to-image diffusion technology, delivering high-quality, photorealistic outputs that are closely aligned and consistent with the user’s prompt”.

The new AI image generator promises accurate hands and faces down to the last detail while strictly following user prompts. Here is an example of a photorealistic image produced by Imagen 2.

The prompt used for this example was: “A shot of a 32-year-old female, up-and-coming conservationist in a jungle, athletic with short, curly hair, and a warm smile.”

Although Google does not have the best reputation for truthful demonstrations, the results are still quite impressive.

Google improved Imagen 2’s image understanding by adding more descriptive captions to the images in its training data. This lets Imagen 2 learn a range of captioning styles and, in turn, build a more nuanced and varied understanding of different prompts.

This is similar to the technique OpenAI used to improve prompt understanding in its current image generator, DALL-E 3. It also helps Imagen 2 gain a more sophisticated grasp of context and subtleties within prompts.

Google claims that the enhancements to both the dataset and the model have led to significant progress in Imagen 2, particularly in areas that are traditionally difficult for text-to-image systems, such as the lifelike portrayal of human hands and faces. Google also states that it has substantially reduced the visual artifacts typically found in AI-generated images.

To improve image quality, Google created an aesthetic model based on human preferences for qualities such as lighting, composition, exposure, and sharpness. Every image was given an aesthetic score, which helped steer Imagen 2 toward the kinds of images humans prefer.
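Google has not published the details of how the aesthetic score feeds into training, so the following is only a rough sketch of one plausible approach: sampling training examples in proportion to a softmax over their scores, so that higher-scored images are seen more often. The function names, the `temperature` parameter, and the toy captions and scores are all hypothetical and are not taken from Imagen 2.

```python
import math
import random


def sampling_weights(scores, temperature=1.0):
    """Convert aesthetic scores into sampling probabilities via a softmax.

    Assumption: a higher score means the image is more pleasing to humans.
    """
    top = max(scores)  # subtract the max for numerical stability
    exps = [math.exp((s - top) / temperature) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def sample_batch(items, scores, batch_size, seed=0):
    """Draw a training batch (with replacement), favouring high-scoring items."""
    rng = random.Random(seed)
    return rng.choices(items, weights=sampling_weights(scores), k=batch_size)


# Hypothetical toy dataset: captions standing in for images, with made-up scores.
captions = ["blurry snapshot", "well-lit portrait", "overexposed landscape"]
scores = [2.0, 8.5, 4.0]

weights = sampling_weights(scores)
batch = sample_batch(captions, scores, batch_size=4)
print(weights)
print(batch)
```

Raising `temperature` flattens the distribution so that lower-scored images still appear regularly; lowering it concentrates training on the top-scored images.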

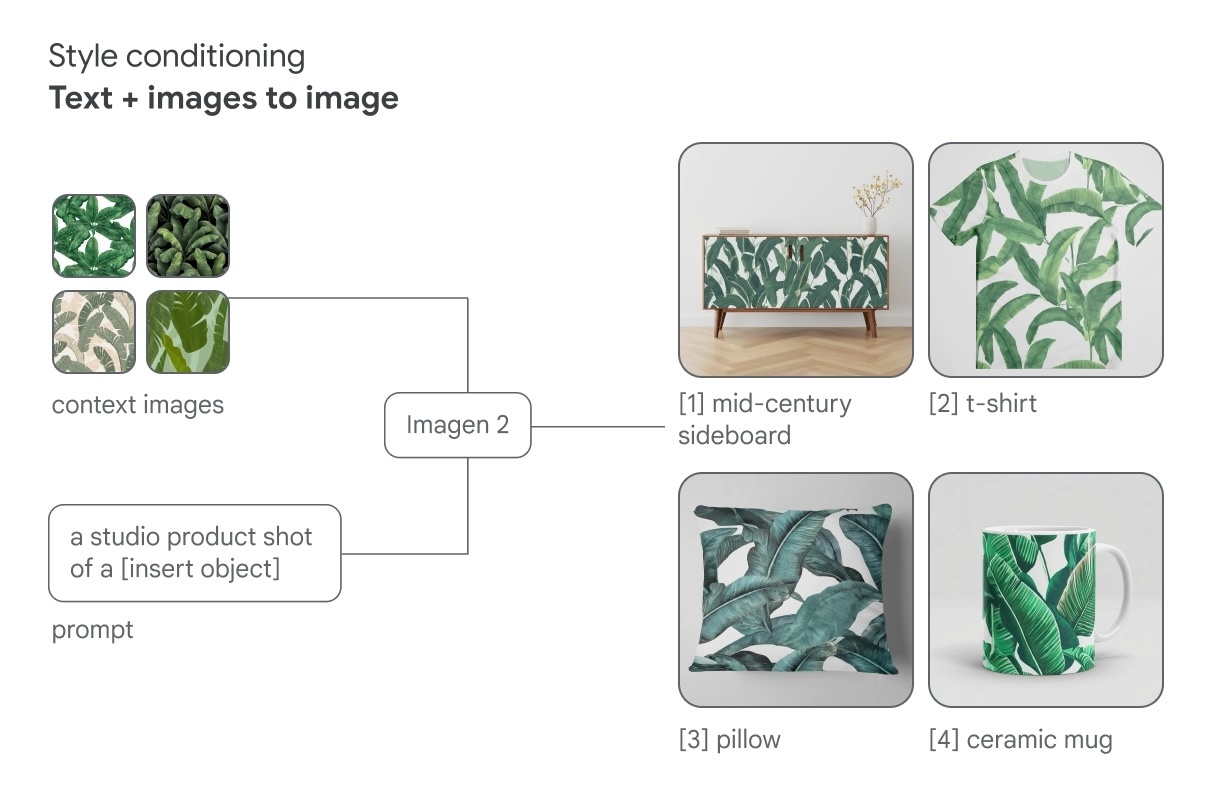

Google’s newest image model also makes it easier to control and customize an image’s style, for example by supplying reference images alongside a text prompt.

Lastly, Imagen 2 comes with image editing capabilities like inpainting and outpainting. These functions enable users to add new elements to an existing image or to expand the image’s original frame.
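To make the difference between the two editing modes concrete, here is a minimal sketch of how such edits are commonly framed: a binary mask marks which pixels the model should generate. For inpainting the mask covers a region inside the image, and for outpainting it covers the new border around it. This is an illustration only and is not the Imagen API; the helper names and the convention that `1` means "generate" are assumptions.

```python
def inpaint_mask(height, width, box):
    """Mask for inpainting: 1 inside the box to regenerate, 0 elsewhere.

    `box` is (top, left, bottom, right), with bottom and right exclusive.
    """
    top, left, bottom, right = box
    return [
        [1 if top <= y < bottom and left <= x < right else 0 for x in range(width)]
        for y in range(height)
    ]


def outpaint_canvas(image, pad, fill=None):
    """Centre `image` on a canvas enlarged by `pad` pixels on every side.

    Returns (canvas, mask): the mask is 1 on the new border, which the model
    would fill in, and 0 over the original pixels, which are kept as-is.
    """
    height, width = len(image), len(image[0])
    new_h, new_w = height + 2 * pad, width + 2 * pad
    canvas = [[fill] * new_w for _ in range(new_h)]
    mask = [[1] * new_w for _ in range(new_h)]
    for y in range(height):
        for x in range(width):
            canvas[y + pad][x + pad] = image[y][x]
            mask[y + pad][x + pad] = 0
    return canvas, mask


# Toy 2x2 "image" with pixel values 1..4.
image = [[1, 2], [3, 4]]
canvas, out_mask = outpaint_canvas(image, pad=1)
in_mask = inpaint_mask(3, 3, box=(0, 0, 2, 2))
```

In both cases the model only has to produce content where the mask is `1`, conditioned on the untouched pixels and the text prompt.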

Imagen 2 is accessible to developers and Cloud users through the Imagen API, which is integrated into Google Cloud’s Vertex AI platform.