Mixtral 8x22B, the latest language model from French AI startup Mistral, is the new leader among open-source AI models, according to the company. Mistral says the model beats its rivals on both performance and efficiency.

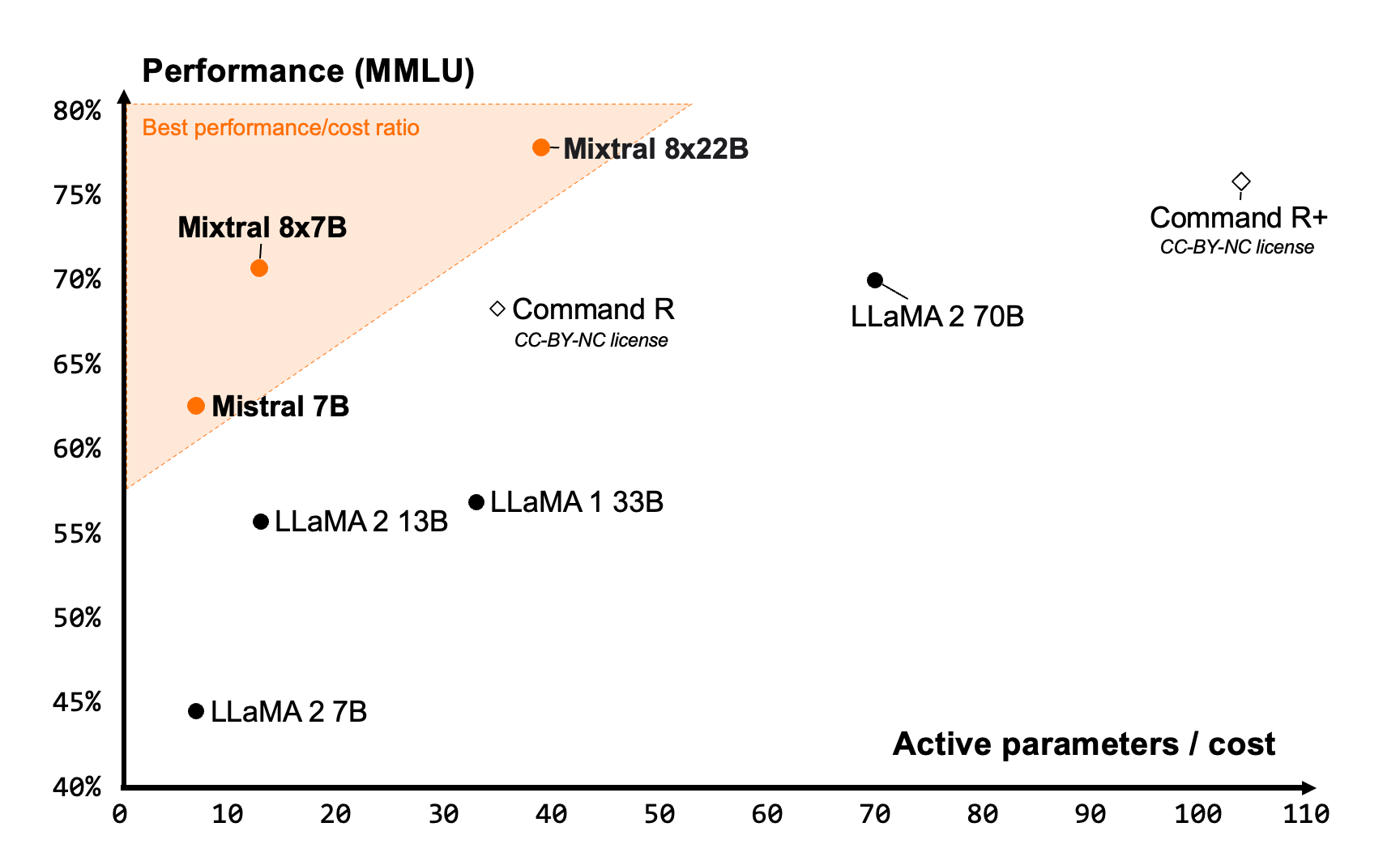

Much of that efficiency comes from the widely used sparse mixture-of-experts (SMoE) architecture: although 8x22B has 141 billion parameters in total, only about 39 billion are active for any given token. According to the development team, this selective activation gives the model an unusually favorable cost-performance ratio for its size. Its predecessor, Mixtral 8x7B, was well received by the open-source community.
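To make "selective activation" concrete, here is a toy Python sketch of top-k expert routing. It assumes top-2 routing over eight experts, which matches Mixtral's published design, but the functions (`top_k_route`, `sparse_moe`), the scalar "experts", and the router scores are hypothetical stand-ins for illustration, not Mistral's code.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def top_k_route(router_logits, k=2):
    """Pick the k experts with the highest router scores.

    Returns (expert_index, weight) pairs; the weights are a softmax over
    the selected logits only, so they sum to 1.
    """
    ranked = sorted(range(len(router_logits)),
                    key=lambda i: router_logits[i], reverse=True)
    chosen = ranked[:k]
    weights = softmax([router_logits[i] for i in chosen])
    return list(zip(chosen, weights))


def sparse_moe(x, router_logits, experts, k=2):
    """Weighted sum of the k selected experts' outputs.

    Experts that are not selected are never evaluated, which is where the
    compute saving of a sparse MoE layer comes from.
    """
    return sum(w * experts[i](x) for i, w in top_k_route(router_logits, k))


# Toy setup (hypothetical): 8 "experts" that each just scale their input;
# `called` records which experts actually ran for this token.
called = []


def make_expert(idx):
    def expert(x):
        called.append(idx)
        return (idx + 1) * x
    return expert


experts = [make_expert(i) for i in range(8)]
logits = [0.1, 2.0, -1.0, 0.5, 3.0, 0.0, -0.5, 1.0]  # hypothetical router output

y = sparse_moe(1.0, logits, experts, k=2)
print(sorted(called))  # [1, 4]: only two of the eight experts ran
print(round(y, 4))     # 4.1932
```

In the real model only the expert feed-forward weights are routed this way; attention and embedding weights are shared and always active, which is one reason the active count (39 billion) is higher than a simple 2/8 of the total.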

Because only a fraction of its parameters are active at any time, the new Mixtral can run faster than dense 70-billion-parameter models while still outperforming other open-source models.
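The speed claim can be sanity-checked with back-of-the-envelope arithmetic. This sketch assumes the common rule of thumb that a forward pass costs roughly 2 FLOPs per active parameter per token; it ignores attention cost over long contexts, routing overhead, and memory bandwidth, so the ratios are rough estimates, not measured speedups.

```python
# Back-of-the-envelope comparison. The 2-FLOPs-per-active-parameter rule
# is an approximation (assumption), not a figure published by Mistral.
FLOPS_PER_ACTIVE_PARAM = 2
BYTES_PER_PARAM_16BIT = 2

mixtral_total = 141e9   # total parameters (from the article)
mixtral_active = 39e9   # parameters active per token (from the article)
dense_70b = 70e9        # a dense model uses every parameter on every token


def forward_flops_per_token(active_params):
    """Approximate forward-pass FLOPs for one token."""
    return FLOPS_PER_ACTIVE_PARAM * active_params


active_fraction = mixtral_active / mixtral_total
relative_compute = forward_flops_per_token(mixtral_active) / forward_flops_per_token(dense_70b)
weights_gb_16bit = mixtral_total * BYTES_PER_PARAM_16BIT / 1e9

print(f"active fraction:      {active_fraction:.1%}")      # 27.7%
print(f"compute vs dense 70B: {relative_compute:.2f}x")    # 0.56x
print(f"16-bit weights:       {weights_gb_16bit:.0f} GB")  # 282 GB
```

The last line shows the trade-off: all 141 billion parameters must still be held in memory (about 282 GB at 16-bit precision, before any quantization), even though only 39 billion do work on each token.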

Like its rivals, 8x22B is proficient in several languages, including English, French, Italian, German, and Spanish. It also has strong programming and mathematics skills, as well as native function calling for interacting with external tools. However, its context window, the maximum number of tokens it can process in a single prompt, is limited to 64,000 tokens, which falls short of some proprietary competitors such as GPT-4 Turbo (128K) or Claude 3 (200K).

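The Mistral team has released Mixtral 8x22B under the Apache 2.0 license, which the company describes as the most permissive open-source license. It lets anyone use, modify, and redistribute the model, including commercially, with only minimal conditions such as keeping the license and attribution notices.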

According to Mistral's results on industry-standard benchmarks, 8x22B holds a clear lead over other open-source models on common comprehension, reasoning, and knowledge tests, including MMLU, HellaSwag, WinoGrande, ARC Challenge, TriviaQA, and NaturalQuestions.

It also outperforms Meta's Llama 2 70B, one of the most widely used open-source models. In the non-English languages it supports (French, German, Spanish, and Italian), 8x22B beat Llama 2 on the HellaSwag, ARC Challenge, and MMLU benchmarks.

But Meta has just released Llama 3, which the company says beats Gemini 1.5 Pro on several benchmarks and is on par with other proprietary AI models, so Mistral's 8x22B will face heavy competition.